Scraping the Web with Beautiful Soup

Published by Arran Cardnell , Nov. 10, 2017

Requests Python Web Scraping BeautifulSoup

12 minute read 3 comments 4 likes 24511 views

Nowadays, there are APIs for nearly everything. If you wanted to build an app that told people the current weather in their area, you could find a weather API and use the data from the API to give users the latest forecast.

But what do you do when the website you want to use doesn't have an API? That's where Web Scraping comes in. Web pages are built using HTML to create structured documents, and these documents can be parsed using programming languages to gather the data you want.

Web Scraping with Python and Beautiful Soup

There are two basic steps to web scraping for getting the data you want:

- Load the web page (i.e. the HTML) into a string

- Parse the HTML string to find the bits you care about

Python provides two very powerful tools for doing both of these tasks. We can use the Requests library to retrieve the web page containing our data, and we can use the awesome Beautiful Soup package for parsing and extracting the data. If you'd like to know a bit more about the Requests library and how it works, check out this post for a bit more depth.

Using Beautiful Soup we can easily select any links, tables, lists or whatever else we require from a page with the libraries powerful built-in methods. So let's get started!

HTML basics

Before we get into the web scraping, it's important to understand how HTML is structured so we can appreciate how to extract data from it. The following is a simple example of a HTML page:

<!DOCTYPE html> <html> <body> <h1 id="page-header">Example Heading</h1> <p class="paragraph">Example paragraph</p> <a href="www.example.com">Example link</a> </body> </html>

HTML will always start with a type declaration of <!DOCTYPE html> and will be contained between <html> / </html> tags.

The <body> tags wrap around the visible part of a website, which is made up by various combinations of header tags (<h1> to <h6>), paragraphs (<p>), links (<a>) and several others not shown in this example, such as <input> and <table> tags.

HTML tags can also be given attributes, like the id and class attributes in the example above. These attributes can help with styling by uniquely identifying elements.

If these tags are new to you, it might be worth taking some time quickly getting up to speed with HTML. Codecademy and W3Schools both offer excellent introductions into HTML (and CSS) that will be more than enough for this tutorial.

Analyzing the HTML

Have you ever followed one of those links on your social media to a "Top 10 films of 2017", only to find it's one of those sites where each listing is on a different page? Part of you wants to find out what they thought was number one, the other part wants to give up waiting for all the ads to load? Well, web scrapping can help you with that.

We are going to use this article from CinemaBlend to find out the 10 Greatest Movies of All-Time.

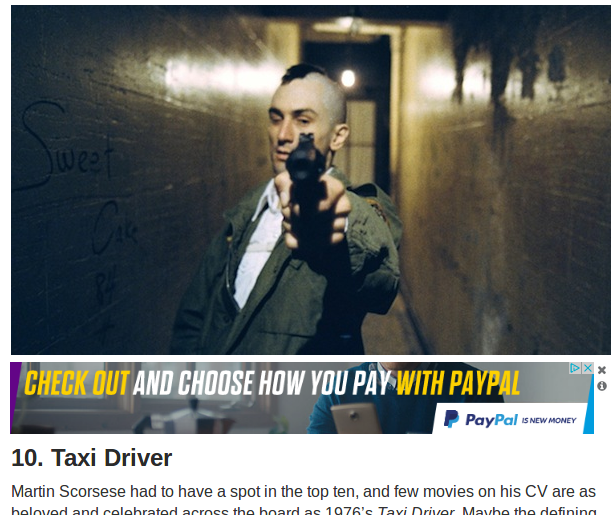

Take a look at the link. It should bring you to a page where you can see that Taxi Driver was ranked 10th in the list. We want to grab this, so the first thing we need to do is look at the page structure. Right click on the page in the link above, and select the Page Source option.

This will bring up the HTML document for the entire page, side-menus and all. Don't be alarmed, I don't expect you to read all that. Instead press Ctrl + F and search for 10. Taxi Driver.

You should find something like this:

<div class="liststyle"> 10. Taxi Driver </div> Martin Scorsese had to have a spot in the top ten [...]

This part of the HTML represents the rank and title found underneath the movie image as shown below:

The easiest way to be sure is that this search should return only 1 result, which means we must be looking at the same part of the page.

So the 10th entry in our list is Taxi Driver, but how do we get the other 9 without having to click through every page?

Open the page source again, but this time search for Continued On Next Page. You should find something like this:

<div class="nextpage"> <a class="next-story" href="https://www.cinemablend.com/new/10-Greatest-Movies-All-Time-According-Actors-73867.html?story_page=2" rel="next"> Continued On Next Page > </a> </div>

This section is rendered as the link we need to click on to see the next entry:

Again, we can tell this is the same element because it is the only result in the whole page source that should match.

Believe it or not, with just those two HTML segments we can create a Python script that will get us all the results from the article.

Scraping the HTML

Before we can write our scraping script, we need to install the necessary packages. Type the following into the console:

pip install requests pip install beautifulsoup4

Now we can write our web scraper. Create a script called scraper.py and open it in your development environment. We'll start by importing Requests and BeautifulSoup:

1 2 | import bs4 # BeautifulSoup import requests |

Let's use the Requests library to grab the page holding the 10. Taxi Driver entry and store it in a variable called page. We'll also create a variable called results, which will store the film rankings in a list for us:

3 4 5 | results = [] page = requests.get('https://www.cinemablend.com/new/10-Greatest-Movies-All-Time-According-Actors-73867.html') |

Do you remember when we looked at the HTML for the web article using page source? Essentially, we now have that page's HTML stored in our variable, and we're going to use BeautifulSoup to parse through the response to find the data we care about.

The next step is to feed page into BeautifulSoup:

6 | soup = bs4.BeautifulSoup(page.text) |

Now we can use the BeautifulSoup built-in methods to extract the film and it's ranking from the snippet we examined earlier:

<div class="liststyle"> 10. Taxi Driver </div> Martin Scorsese had to have a spot in the top ten [...]

To do this, we can use CSS selector syntax. In CSS, selectors are used to select elements for styling. Notice how the div element has a class of liststyle? We can use this to select the div tag, since a div tag with this exact class only appears once on the page.

Note: Usually,

classattributes aren't unique and are used to style multiple elements in a similar way. If you want to guarantee uniqueness, try to use anidattribute.

7 8 | element = soup.select('div.liststyle') movie = element[0].get_text() |

Here, we have used the BeautifulSoup select method to grab the div element we want. The select method returns a list containing any matching elements. In our case, element returns: [<div class="liststyle">10. Taxi Driver</div>].

Since our list only contains one item, we get the element with index 0. We then use the BeautifulSoup get_text method to return just the text inside the div element, which will give us '10. Taxi Driver'.

Finally, let's append the result to our results list:

9 | results.append(movie) |

Crawling the HTML

Another key part of web scraping is crawling. In fact, the terms web scraper and web crawler are used almost interchangeably; however, they are subtly different. A web crawler gets web pages, whereas a web scraper extracts data from web pages. The two are often used together, since usually when you crawl some web pages you also want to get some data from them, hence the confusion.

In order for us to determine the other 9 rankings in the article, we will need to crawl through the web pages to find them. To do that, we are going to use the snippet we discovered before:

<div class="nextpage"> <a class="next-story" href="https://www.cinemablend.com/new/10-Greatest-Movies-All-Time-According-Actors-73867.html?story_page=2" rel="next"> Continued On Next Page > </a> </div>

An <a> tag represents a link, and the destination for that link when clicking on it is held by the href attribute. We want to pass the value held by the href attribute to the Requests library, just like we did for the first page. We can do that with the following:

10 11 | link = soup.select('div.nextpage a.next-story') href = link[0].get('href') |

Here we have selected for any a tag that contains the class next-story and is within a parent div element that itself has a class of nextpage. This will return just a single result, since a link matching this criteria occurs just once on the page for our Continued On Next Page link.

We can then get the value of the href attribute by calling the get method on the a tag and storing it in a variable called url.

The next step would be to pass the href variable into the Requests library get method like we did at the beginning, but in order to do that we are going to need to refactor our code slightly to avoid repeating ourselves.

Refactoring the Scraper

Right now, our scraper successfully grabs our chosen page and extracts the movie title and ranking, but to do the same for the remaining pages we need to repeat the process without just duplicating our code. To do this we are going to use recursion.

Recursion involves coding a function that calls itself one or more times, something that Python is able to take advantage of very easily. Here is our scraper refactored as a recursive function:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 | import bs4 # BeautifulSoup import requests def scraper(url, results=None): if not results: results = [] page = requests.get(url) soup = bs4.BeautifulSoup(page.text, "html.parser") element = soup.select('div.liststyle') try: movie = element[0].get_text() results.append(movie) except IndexError: return 'No matching element found.' link = soup.select('div.nextpage a.next-story') try: href = link[0].get('href') scraper(href, results) except IndexError: return 'No matching element found.' return results |

Let's go through each section of the code and see what is happening.

5 6 7 8 9 | def scraper(url, results=None): if not results: results = [] page = requests.get(url) |

The scraper function takes two arguments. The first, url, is the URL of the page you want to extract information from, which gets passed into requests.

The second argument results is optional but is key to the operation of our recursive function. When the function is first called, it should be called as follows:

scraper('https://www.cinemablend.com/new/10-Greatest-Movies-All-Time-According-Actors-73867.html')

The results parameter is not provided, and thus is set to an empty list. The function then grabs the page and extracts the information from it, appending it to the results list.

The next vital part of our recursive function lies here:

20 21 22 23 24 25 26 | link = soup.select('div.nextpage a.next-story') try: href = link[0].get('href') scraper(href, results) except IndexError: return 'No matching element found.' |

If we find a link on the page matching the CSS selector div.nextpage a.next-story, then we will call the scraper function again, this time with the href of the link to the next page AND the results list we have generated so far. This means when scraper runs for any subsequent calls, the results parameter will not be empty and instead we will continue to append new results to it.

When the scraper reaches the last page of the article (i.e. the movie ranked number one), then there will be no link matching the CSS selector and our recursive function wil return the final results list.

Note: Take care when using recursion. If you don't create a condition that will eventually end the function calls, a recursive function will run continously until it causes a runtime error. This is to prevent an issue known as stack overflow.

A complete working script could look something like this:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 | import bs4 # BeautifulSoup import requests def scraper(url, results=None): if not results: results = [] page = requests.get(url) soup = bs4.BeautifulSoup(page.text, "html.parser") element = soup.select('div.liststyle') try: movie = element[0].get_text() results.append(movie) except IndexError: return 'No matching element found.' link = soup.select('div.nextpage a.next-story') try: href = link[0].get('href') scraper(href, results) except IndexError: return 'No matching element found.' return results if __name__ == '__main__': rankings = scraper('https://www.cinemablend.com/new/10-Greatest-Movies-All-Time-According-Actors-73867.html') print(rankings) |

Scraper limitations

So now you've seen how easily you can extract information from a web page, why wouldn't you use it all the time? Well, sadly, there are downsides.

For starters, web scraping can also be slower obtaining the information than through an equivalent API, and some sites don't like you scraping information from their pages, so you need to check their policies to see it's okay.

But perhaps the most significant drawback is changes to the the HTML page structure. One of the advantages of APIs is that they are designed with developers in mind, and are therefore less likely to changes how they work. Web pages on the other hand can change quite dramatically. If the web page author decides to change the class names of their elements, such as the nextpage and next-story CSS selectors we used, our scraper will break. This can be frustrating if a website updates regularly.

That being said, web sites have improved their structures a lot over the years with the popularity of many easy-to-use frameworks, which means pages are unlikely to change too much over time.

Summary

Hopefully you've seen enough that you can now use web scraping confidently in your own projects. The advantage of web scraping is that what you see is what you get and If you know the information you are after, you don't need to dig around trying to figure out an API to get it. Just code a simple scraper and it's yours!